
In January, OpenAI, developer of ChatGPT, launched ChatGPT Health, one of many patient-facing generative artificial intelligence (AI) tools in various stages of development.

From educating patients on women’s sexual health and hip replacement surgery to generating postoperative instructions and digitizing informed consent, the potential medical applications of generative AI tools for the public are vast. In general, their goal is to increase patients’ comprehension of complex medical information and, in the case of ChatGPT Health, provide personalized information based on individual users’ own data. In the not-too-distant future, some experts predict new AI technologies will be able to independently make decisions about patient care.

At their most sophisticated, though, these technologies should serve as a “clinician extender,” not a clinician replacer, said cardiologist Haider Warraich, MD, a program manager at the US government’s Advanced Research Projects Agency for Health (ARPA-H) who previously helped shape digital health and AI policy at the US Food and Drug Administration (FDA).

“I hate the term AI doctor,” Warraich said. “There’s a lot more to me than what these technologies can do.”

There’s more than one reason why using an AI chatbot for health advice is not the same as consulting a physician. Recent studies have raised questions about the accuracy of health information provided by chatbots, and physicians and consumers have expressed concerns over the sharing of personal medical data with large language models (LLMs) that aren’t covered by the Health Insurance Portability and Accountability Act (HIPAA).

ChatGPT Health failed to properly triage the most and the least serious cases in what might be the first study to assess the new tool’s performance, according to an accelerated preview of the article published in late February. The authors, who tested the chatbot using vignettes written by physicians, noted that under-triage of emergency conditions may delay or preclude lifesaving treatment, while over-triage of nonurgent presentations may increase health care utilization.

But LLMs hold promise as a way of expanding access to medical expertise or, at the very least, preparing patients to make the best use of visits with their physicians. “There’s a reason patients want to use these models,” said radiation oncologist Danielle Bitterman, MD, clinical lead for data science and AI at Mass General Brigham. “It’s so hard to access health care right now.”

Of ChatGPT's 800 million weekly users, 1 in 4 seeks health-related information, according to Nate Gross, MD, MBA, who leads health care strategy at OpenAI.

“We said, ‘Hey, let’s build some differences to the product to make it a more contextually aware experience,’” as well as one with additional privacy and security connections, he recalled.

Users of “vanilla” ChatGPT, as Gross describes the forerunner of ChatGPT Health, can upload a physician’s note or copy laboratory results from their patient portal, but those bits of information lack context. “Just uploading a really short doctor’s note could be interpreted very differently if you’re age 20 or age 70.”

ChatGPT Health, on the other hand, invites users to upload all their personal health information, including laboratory test and imaging results as well as data collected by their Apple Watch.

Although OpenAI consulted with hundreds of physicians from around the world to improve its models, ChatGPT Health is not designed to play doctor, Gross emphasized.

“We train our models specifically to guide patients to health care professionals for diagnosis and treatment,” he said. “We’re looking to give people information, not tell them if they’re sick, not tell them if they’re healthy. We’re a partner to the health care system in that regard.”

By late February, ChatGPT Health was not yet available to all comers; prospective users could add their names to a waitlist. OpenAI declined to say how many people had used ChatGPT Health so far.

Privacy is one of users’ main concerns about ChatGPT Health and other LLMs that allow people to upload personal health information.

Elon Musk recently suggested in an X post that “[y]ou can just take a picture of your medical data or upload the file to get a second opinion from Grok,” an AI chatbot developed by his company, xAI.

Commenters were aghast at the idea. One decided to ask Grok’s opinion and posted its reply: “Grok is not HIPAA compliant, and we strongly advise against uploading sensitive medical data.”

Gross acknowledged that ChatGPT Health isn’t HIPAA compliant either. That’s not due to negligence, he pointed out, but because ChatGPT Health, like Grok, is not an entity covered by HIPAA, such as a physician or health insurance plan, or a business associate of a covered entity.

“They are not held to the same legal requirements that doctors and health care institutions are,” Bitterman said of the AI companies.

ChatGPT Health “is building on a lot of very proprivacy protections that ChatGPT already had, with additional layers of protection,” Gross said. “We wanted to set a really high bar.” For example, he noted, OpenAI will not include any ChatGPT Health conversations among the data it uses to train the LLM. And, he explained, as with ChatGPT, ChatGPT Health users can opt to make chats temporary, meaning they won’t appear in their history and ChatGPT Health won’t save them.

Even so, “those assurances may not be worth that much if companies get sold,” pointed out David Liebovitz, MD, codirector of the Institute for Artificial Intelligence in Medicine’s Center for Medical Education in Data Science and Digital Health at the Northwestern University Feinberg School of Medicine.

For now, he said, if patients asked him whether he thought they should try ChatGPT Health, he’d probably suggest “they could wait a little bit longer, when there could be more privacy-related tools.”

Even if they want to, chatbot users—especially clinically challenging patients with long, complex medical histories—can’t always upload all their medical records, Bitterman pointed out.

“It’s very hard to ensure that you have all your medical records,” she said. “Those are the missing pieces that make clinical practice hard.”

Gross acknowledged that “our health care system is very fragmented.” But, he said, if patients forget to upload records from a particular physician or hospital, their physicians’ most recent notes likely will at least mention them.

Even patients who have all the relevant information may not paint a complete picture of their situation when interacting with LLMs, concluded research published in February.

The study, led by the Oxford Internet Institute in the UK, tested whether LLMs could help individuals without medical training identify underlying conditions and choose a course of action in 10 physician-drafted health scenarios. Researchers randomly assigned 1300 participants to receive assistance from 1 of 3 LLMs or, to serve as the control, a source of their choice, which was typically Google. The 3 LLMs were ChatGPT-4o, Meta’s Llama 3, and Command R+, which was developed by Cohere, a Canada-based company.

On average, when the scientists presented the vignettes directly to the LLMs, bypassing human interaction, the chatbots correctly identified the condition 95% of the time and the appropriate course of action 56% of the time.

But when study participants presented the vignettes to the same LLMs, the chatbots correctly identified relevant conditions only about a third of the time and the appropriate course of action less than 44% of the time. The LLMs performed no better than Google did in the control group.

“The limiting factor wasn’t just the model’s medical knowledge,” coauthor Rebecca Payne, MBBS, PhD, MPH, a general practitioner at the North Wales Medical School, Bangor University, said in an email. “It was the human-AI communication loop: people providing incomplete information, the model misinterpreting key details, and, importantly, people failing to carry forward a relevant diagnostic suggestion that the model did raise during the exchange.”

Whether using an LLM or Google, study participants “tended to underestimate the severity in the vignettes we tested,” Payne said. “That raises the risk that some users may feel falsely reassured or may delay seeking care.”

Payne’s findings didn’t surprise Bitterman. “With these chatbots, it’s incumbent on the user to know what they need to provide to the model to get the best information,” she said. “Having that kind of clinical nuance requires a lot of on-the-ground training,” not just the LLMs’ training on medical literature and textbooks.

The advice she gives to patients: “Don’t take immediate action just based on what you find online. We can discuss it together.”

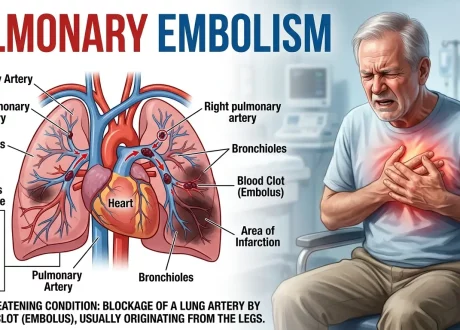

The result could be deadly if, say, a chatbot mistakenly told a user that they didn’t need to go to the emergency department because their chest pain was due to indigestion, not a heart attack.

That’s why Payne advises patients to use chatbots only for low-stakes support, such as explaining medical terms, preparing questions for a clinician, and summarizing what they’ve been told. “LLMs currently perform best as ‘assistants/secretaries’ that help organize known information rather than generate high-stakes clinical interpretations,” she said.

Physicians are working on a number of generative AI applications for more focused, lower-stakes purposes.

For urologist Gio Cacciamani, MD, the diagnosis of a loved one with a serious disease unrelated to his specialty gave him a taste of what patients face when trying to decipher scientific information.

“When it comes to something outside my field, it’s very challenging to read,” said Cacciamani, director of the Artificial Intelligence Center for Surgical and Clinical Applications in Urology at USC’s Keck School of Medicine. “That situation opened my eyes.”

Cacciamani discovered 2 types of medical information online—either “extremely readable but not certified,” such as blog posts, or “peer-reviewed, certified, but not readable at all,” mainly publications in scientific journals.

Generative AI “has the potential to bridge long-standing gaps between certified medical knowledge and patient understanding,” Cacciamani and coauthors noted in a commentary published in February.

Using the retrieval-augmented generation, or RAG, technique, which grounds the LLM’s answers in passages retrieved from a medically verified knowledge base rather than relying solely on what the model absorbed during training, he developed Pub2Post, a tool that can translate abstracts and full articles into plain language and summarize them. More than 6000 people have turned to Pub2Post, and some medical journals are using it for their social media posts, Cacciamani said.
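For readers unfamiliar with RAG, the short Python sketch below illustrates the general idea under clearly stated assumptions: the knowledge-base passages, the simple word-overlap (cosine similarity) retriever, and the function names `retrieve` and `build_prompt` are all hypothetical and are not taken from Pub2Post or any other tool described here. The model itself is not retrained; instead, the passages most relevant to a question are looked up when the question is asked and placed into the prompt that an LLM would then answer from. The final call to an LLM is deliberately omitted.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for a "medically verified" knowledge base (illustrative only).
KNOWLEDGE_BASE = [
    "Keep the surgical incision clean and dry for the first 48 hours.",
    "Call your surgeon if you develop a fever above 101 degrees Fahrenheit.",
    "Avoid lifting objects heavier than 10 pounds for 2 weeks after surgery.",
]


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its word tokens (a simple bag of words)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the k passages most similar to the question (the 'retrieval' step)."""
    q = tokenize(question)
    ranked = sorted(KNOWLEDGE_BASE, key=lambda p: cosine(q, tokenize(p)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Place the retrieved passages in the prompt (the 'augmented generation' input)."""
    context = "\n".join(f"- {p}" for p in retrieve(question, k))
    return (
        "Answer using only the verified passages below.\n"
        f"Passages:\n{context}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    # This prompt would then be sent to an LLM; that call is not shown here.
    print(build_prompt("When should I call my surgeon about a fever?"))
```

Real systems typically rank passages with learned embeddings and a vector index rather than word overlap, but the retrieve-then-generate structure is the same.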

Antonio Forte, MD, a plastic surgeon at the Mayo Clinic in Jacksonville, Florida, used RAG to develop an LLM virtual assistant for postoperative instructions.

Patients often are discharged after surgery while still experiencing the residual effects of anesthesia or painkillers, making it difficult to remember postoperative instructions, Forte said. And, he added, they frequently misplace printouts of the information. “That’s why we thought, ‘What if we got patients the ability 24/7 to have access to high-quality, medically verified information?’”

In testing with simulated patient interactions, the virtual assistant demonstrated strong technical accuracy, safety, and clinical relevance, albeit at a relatively high 11th-grade reading level, Forte and his coauthors recently reported.

And Bitterman has tested the ability of ChatGPT-4o and Llama 3.2-8B to answer patients’ questions about clinical trials with the goal of simplifying informed consent forms. In a recent study, she and her coauthors found that ChatGPT-4o was significantly more reliable and safer than Llama 3.2-8B in answering these queries.

In January, 2 federal agencies, both part of the US Department of Health and Human Services, launched initiatives focusing on digital health tools for patients with common, chronic conditions. One is designed to evaluate a regulatory pathway for digital health tools including LLMs, and the other aims to spur the development of an LLM for patients with heart failure.

The FDA, working with the Center for Medicare & Medicaid Innovation, announced the Technology-Enabled Meaningful Patient Outcomes (TEMPO) for Digital Health Devices Pilot.

According to the FDA, the voluntary pilot will evaluate a new enforcement approach “that supports digital health devices intended for use to improve patient outcomes in cardio-kidney-metabolic, musculoskeletal, and behavioral health conditions.”

The FDA has not yet authorized any LLM, Warraich said. Generative AI applications such as LLMs “present a unique challenge because of the potential for unforeseen, emergent consequences,” according to a Special Communication he coauthored in JAMA in 2024.

Today, Warraich is leading a new ARPA-H initiative whose goal is the development of new LLM systems that are ready for submission to the FDA within 2 years for authorization as medical devices. The Agentic AI-Enabled Cardiovascular Care Transformation (ADVOCATE) program “aims to transform advanced cardiovascular disease management with an agentic AI system that can provide 24/7 holistic clinical care.”

“I believe that as AI presents an opportunity to fundamentally transform what it means to be a clinician, a patient, and the relationship between them, cardiology will be at the tip of the spear…,” Warraich noted in an opinion piece published in February in the Journal of the American College of Cardiology.

The first use for technologies developed through ADVOCATE will be providing care for patients with congestive heart failure. If a patient is feeling short of breath, for example, the technology will decide if the patient should go to the emergency department and whether they might need a new prescription or a higher dose of a current medication, Warraich explained. Along with developing AI agents that can be trusted to make such changes autonomously, ADVOCATE will also support the creation of a supervisory AI “overseer” to monitor the safety and effectiveness of clinical AI agents after they’ve been deployed by health systems.

Given that ChatGPT is only 3 years old, the rapid development of new generative AI applications for patient use may seem like science fiction. As Bitterman said, “This is so far beyond what I would have predicted 5 years ago.”

Published Online: March 6, 2026. doi:10.1001/jama.2026.1122

Conflict of Interest Disclosures: Dr Bitterman reported serving as an associate editor of JCO Clinical Cancer Informatics, Annals of Oncology, and radiation oncology for HemOnc.org. She also reported receiving consulting fees from Inspire Exercise Medicine LLC and honoraria from Harvard Medical School, Med-IQ, and the National Comprehensive Cancer Network and serving as a scientific advisory board member for Blue Clay Health LLC and Mercurial AI. Dr Liebovitz reported receiving research grants from Children’s Hospital of Philadelphia, the FDA, Merck Sharp & Dohme, the National Institutes of Health, the National Science Foundation, and the University of Chicago. He also reported that he has an ownership or investment interest in CodeAccelerate, Dendritic Health AI, KYRAL Inc, and Optima Integrated Health Inc. Dr Cacciamani reported holding equity in EditorAIPro, of which Pub2Post is a product. Dr Forte reported that his research at Mayo has been funded by Dalio Philanthropies, the Gerstner Family Foundation, the Richard M. Schulze Family Foundation, and Schmidt Sciences and that he is a paid medical advisor for OpenEvidence. No other disclosures were reported.